Startup Weekend 2022

Victor Evangelista / Nov 7, 2022

Web Development • 7-minute read

YouChef blew everyone’s socks off, and we won the People’s Choice Award!

This past weekend, I had the pleasure of attending Techstars Startup Weekend in Las Vegas, NV. It is so fun to challenge yourself to help build a startup in only two days.

This was one of my first in-person ventures back out into the tech community since my life-changing illness in June, when I became almost 100% paralyzed while in Mexico.

After several months of recovery, rest, and physical therapy, it was time to get back out there. Participating in a hackathon would give me the opportunity to flex my skills, meet incredible people, and overcome the discomfort of people seeing my physical condition.

Pitching Ideas and Picking Teams

Presenting on stage with my cane just in case

On Friday night, everyone got the opportunity to go on stage and pitch an idea, so long as it wasn’t already a functional business you were running prior to the event.

I enjoyed the challenge of pitching on stage to an audience of over 50 people once again. With my new neurological condition, though, believe me: my confidence had plummeted.

“Just Do It”, from Phil Knight’s book “Shoe Dog: A Memoir by the Creator of Nike”, is the phrase that played in my mind. I pushed through, even though I was nervous, even if I needed a cane to go on stage, and even though my voice is still recovering.

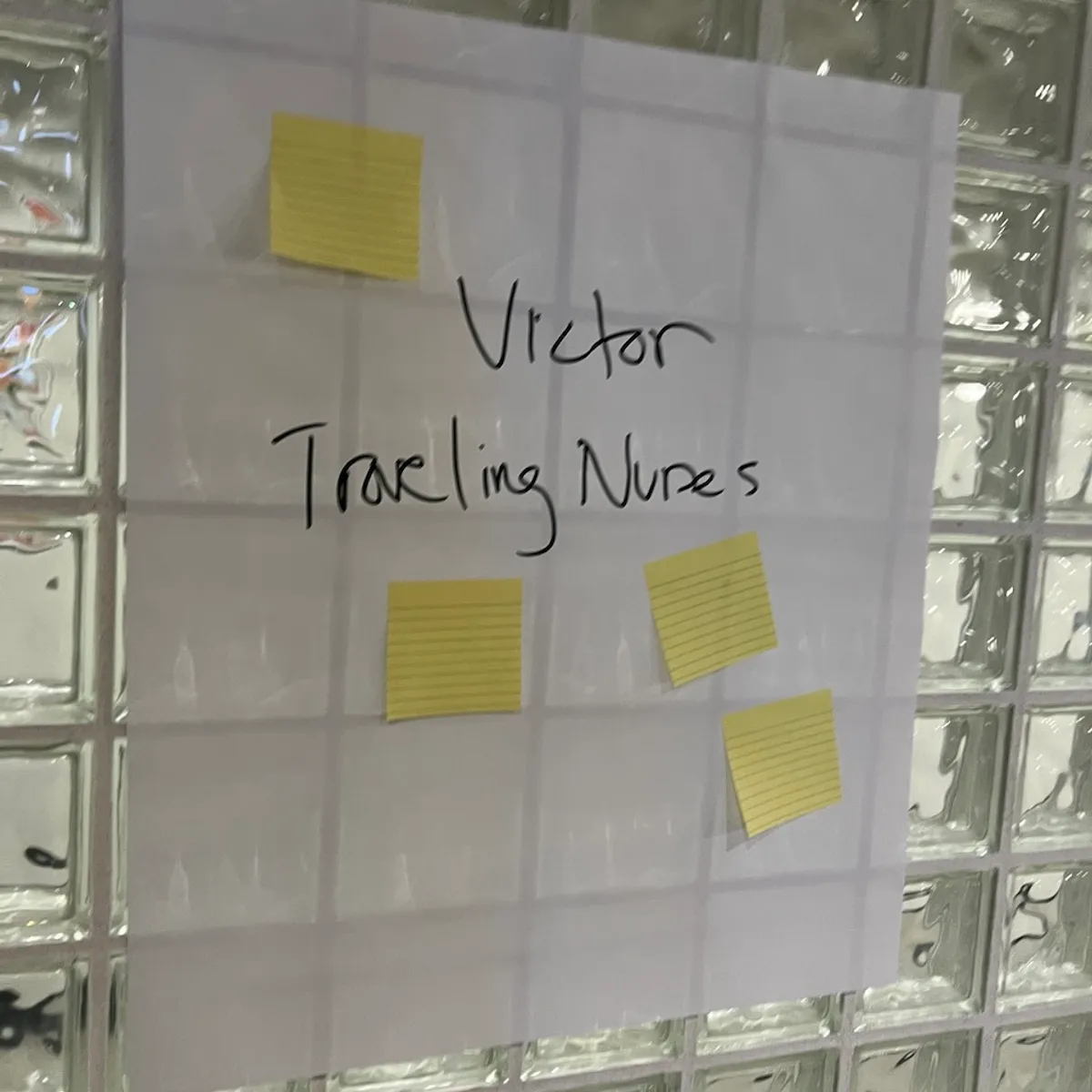

I was happy to find that my business idea, tailored to travel nurses, received multiple votes!

The idea was to balance the workforce needs of hospitals struggling to meet demand across the country. We’d even enable private patients to match with travel nurses via a mobile app.

The business plan could involve partnering with insurance companies, a paid CRM, and more.

As exciting as it was, my initial team and I ultimately decided to join other projects we were interested in. Everybody’s ideas were that exciting. I chose YouChef!

The idea behind YouChef is a mobile app that uses your camera to scan your fridge for food using machine learning. It labels and quantifies that food as accurately as possible.

With the user’s food inventory, we can help them prevent food wastage by notifying them before expiry dates and even provide them with fun recipes they can already prepare!

That’s just the tip of the iceberg. You could imagine where it could go from there: cooking instructions, options for recipes from different cultures, partnerships, etc.

Recently, machine learning has been a large interest of mine. I’ve spent time tinkering with various ways the technology could be used to improve our lives and add exciting new features to the products we already use day to day.

In the end, it was a no-brainer to join. The team looked awesome and needed someone willing to tackle the machine learning aspect of the product. Owning that feature sounded like the most fun and challenging project to work on in less than two days.

Getting to Work

Victor Evangelista (left), Christen Smith (middle), and David Duval (right) all hunched and focused

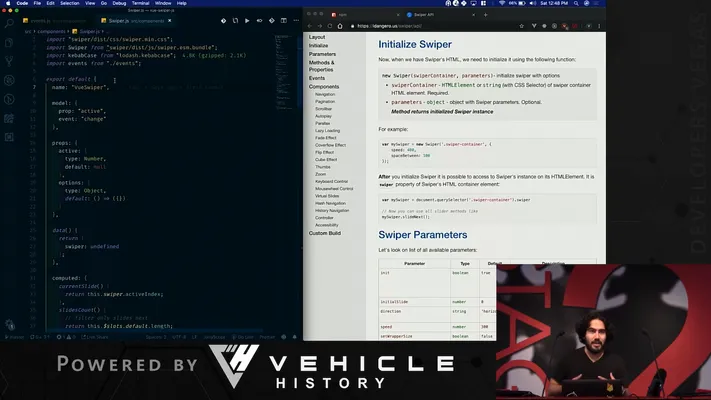

We worked our asses off, collaborated, and had fun doing it from Saturday morning until Sunday’s 5pm presentations. Christen Smith and Vianey Lozoya focused on marketing and research, Allister Dias developed a business plan, Manny Costa spun us up a WordPress landing page, and David Duval and I got to work on building the damn thing!

David would take on developing an MVP mobile app using React Native. It needed to use the phone’s camera, encode an image in Base64, and display food inventory. As a plus, we wanted to show recipe ideas and draw nice boxes around detected foods for the user to see.
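For the curious, here is a rough idea of what "encode an image in Base64" looks like in practice. This is a minimal Python sketch, not the React Native code from the weekend. It wraps raw image bytes in a request body shaped for the Cloud Vision REST `images:annotate` endpoint. The function name `build_vision_request`, the choice of the `OBJECT_LOCALIZATION` feature, and the `max_results` default are assumptions made for illustration.

```python
import base64
import json


def build_vision_request(image_bytes: bytes, max_results: int = 20) -> dict:
    """Build a JSON-serializable body for a Cloud Vision images:annotate call.

    Hypothetical helper: the feature type and result limit are illustrative
    guesses, not the settings used at the event.
    """
    # JSON cannot carry raw bytes, so the image travels as Base64 text.
    encoded = base64.b64encode(image_bytes).decode("ascii")
    return {
        "requests": [
            {
                "image": {"content": encoded},
                "features": [
                    {"type": "OBJECT_LOCALIZATION", "maxResults": max_results}
                ],
            }
        ]
    }


if __name__ == "__main__":
    # Placeholder bytes standing in for a real fridge photo.
    fake_photo = b"\xff\xd8\xff\xe0"
    body = build_vision_request(fake_photo)
    print(json.dumps(body, indent=2))
```

Nothing here talks to the network. Sending the body would also need credentials and an HTTP client, which are left out on purpose.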

I took on finding a machine learning solution for our main problem and getting it up and running reliably. After spending valuable time researching, I finally opted to use Google’s Cloud Vision AI. It provided a pretrained model for us to work with, which served as a reliable foundation. In the full version, we would combine pretrained models with our own, but there was just no time for something like that in our situation.

It wasn’t plug and play. The Vision API doesn’t provide classification/categories for the objects it detects, so if we took a picture of a fridge, we’d receive everything: the shelves, the lightbulb, mason jars, and of course the food items it could detect.

From here, it was a lot of testing, trial and error, and sacrificial offerings to the AI gods. With so little time, how could we get a functional demo up and running to present live?

The solution I came up with was to cross-reference results with our own database of valid food items and take note of questionable results. If we weren’t sure about an item, it went through a second round of processing. In came Google’s Natural Language AI. It wasn’t foolproof, but we filtered results down to the most relevant and most certain food items. Now we could intelligently mark items for the user to correct on the frontend.
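As a rough illustration of that cross-referencing step (not the code from the weekend), the Python sketch below sorts detections into items to accept right away and items to send through a second pass. The `KNOWN_FOODS` set, the `Detection` class, the `triage` function, and the 0.6 score threshold are all hypothetical stand-ins, and the second pass itself is not shown.

```python
from dataclasses import dataclass

# Hypothetical stand-in for a database of valid food items.
KNOWN_FOODS = {"apple", "banana", "carrot", "tomato", "milk", "egg"}


@dataclass
class Detection:
    name: str     # label returned by the vision model
    score: float  # model confidence between 0 and 1


def triage(detections, known_foods=KNOWN_FOODS, min_score=0.6):
    """Split detections into accepted foods and items needing a second pass."""
    accepted, second_pass = [], []
    for detection in detections:
        label = detection.name.strip().lower()
        is_known = label in known_foods
        is_confident = detection.score >= min_score
        if is_known and is_confident:
            # Known food with a solid score: keep it.
            accepted.append(label)
        elif is_known or is_confident:
            # Either a shaky match on a known food or a confident label
            # we do not recognize: questionable, so check it again.
            second_pass.append(label)
        # Unknown and low-confidence labels are dropped entirely.
    return accepted, second_pass


if __name__ == "__main__":
    results = [
        Detection("Apple", 0.92),
        Detection("Shelf", 0.88),
        Detection("Banana", 0.41),
        Detection("Light", 0.20),
    ]
    accepted, second_pass = triage(results)
    print("accepted:", accepted)
    print("second pass:", second_pass)
```

The threshold is the main knob. Raise it and more items get flagged for review, lower it and more noise slips into the inventory.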

Sunday morning, David was still working his butt off with mock data while I finished connecting the frontend to our backend service. We had the camera functionality, a food inventory list from mock data, and mock data recipes.

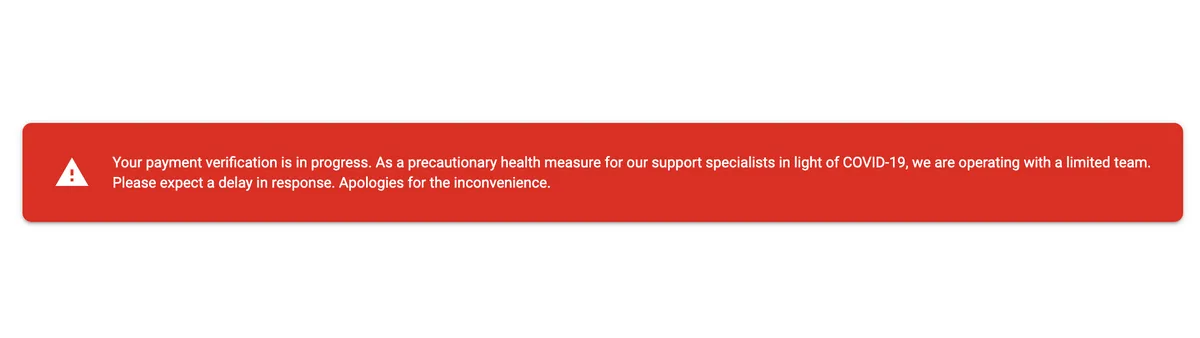

Time to bring it to life! Oh wait, Google detected suspicious activity. I don’t know if it was because I was hitting the server too often or what exactly transpired, but I didn’t have time to figure it out. Our live presentation was in jeopardy.

Even after providing Google with pictures of government ID, payment method, a urine test and a blood sample, we were still locked out. With quick thinking, we activated a new account, set it up all over again, and brushed the event off.

We wouldn’t have time to implement the bounding boxes on the fridge image, or to use real recipe data, but we were almost ready. It’s a shame too, because Jordan Plows showed us a cool way we could generate recipes using a model from Hugging Face.

It was now time for polishing, sanity checks, and ensuring everything worked reliably and consistently. We also needed food items to demo with! Christen, Vianey, and Allister ran to Whole Foods and got the goods: some fruits and vegetables.

After some last-minute tech checks on stage, we were as ready as anyone could be.

Our Judges

- Kris Tryber - Co-founder of EXO Freight - Funded by Y Combinator

- Kegan McMullan - Managing Director of gener8tor - Accelerates startups

- Shubhi Nigam - Ex-founder and Senior Product Manager at Carta

- Bill Eichen - Partner at Gimmel Fund - HDMI Leader & DisplayPort USB-C Creator

With such qualified and experienced judges, the pressure was on. There were 7 total startups full of intelligent people, so the competition was fierce.

The Presentation

Without further ado, here’s what you’ve been waiting for! Unfortunately, the video is slightly overexposed, so some aspects of the demo are hard to see, but you’ll still see that it went very well and our demo detected most of the food items accurately!

The Result

According to a coordinator, YouChef won the popular vote by a landslide, and the judges loved us! We managed to secure second place out of SEVEN startups.

Phew. Now I need an actual weekend.